We know better, but we do it anyway.

We know facial characteristics don’t reflect personality, but in less than a second, we will form opinions about strangers based on their looks.

In fact, our opinions of others say more about us than them, according to studies.

We tend to read personalities into faces based on assumptions we’ve made or picked up from our social setting, according to Forbes Magazine. Research has shown that almost half these impressions are wrong. We shouldn’t trust these instant judgments of people based on their facial features.

But this wasn’t true for most of history. In ancient times, Chinese, Indian, and Greek scholars believed the face was an expression of the inner person.

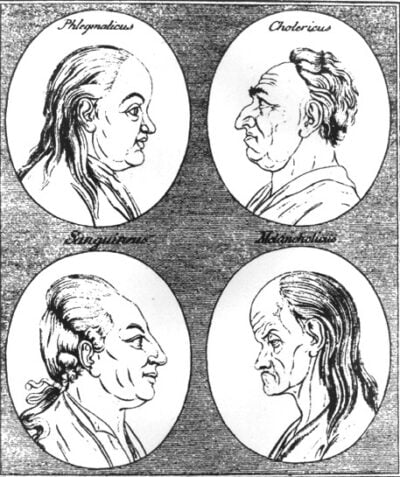

The idea persisted for millennia, and in the 16th century gained a sort of legitimacy from Giambattista della Porta, an Italian alchemist. He published a book that introduced the principles of physiognomy: reading someone’s character or temperament from their facial features. The book showed pairs of human and animal faces, suggesting that man and beast had similar characteristics.

In the 18th century, Johann Lavater tried to tie character traits to the shape and setting of eyes, mouth, nose, and other facial features. Not surprisingly, he gave negative characteristics to people who didn’t look like his Swiss countrymen.

Around this time, scholars began theorizing that physical beauty reflected high moral character. And, conversely, that an ugly face revealed immorality.

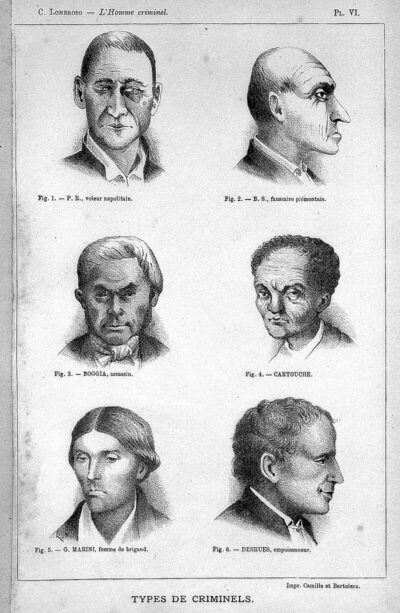

Cesare Lombroso took this idea further in the 19th century by arguing that criminals were less evolved human beings. Their faces would be asymmetric, with larger jaws, higher cheekbones, prominent brows over the eyes, drooping eyelids, large teeth, and mismatched ears close to the skull.

Connecting criminality with appearance helped promote the idea of eugenics in the early 20th century. Eugenics advocated improving society by removing members of what some called inferior races.

But after World War II, Americans had seen the consequences of judging and eliminating people for their appearance. American foreign policy and the United Nations charter took measures to prevent similar actions in the future.

Now, unexpectedly, physiognomy is making a digital comeback.

Artificial Intelligence is being used to “read” faces and make judgments about people’s capabilities and temperament.

Researchers are already working on a program to detect which faces belong to convicted criminals, according to American Scientist. They’re also studying how A.I. can read personality traits in women that would interest men.

One company offers to identify pedophiles and terrorists by their faces. Another claims to detect emotions in people as they respond to advertisements. A human resources system is now employing A.I. to analyze facial features and emotional expression to determine which applicants are the best fit for a job.

Another study is determining if computers can identify whether a person is gay or straight based on their photograph. It used photos from dating sites of people who identified themselves as gay. Researchers found that their algorithm could recognize “gay features” of people who said they were gay, but couldn’t tell if a randomly chosen face was of someone gay or straight. It’s important to know that researchers aren’t even sure what markers the program used to claim the photographed faces were gay.

Perhaps all these ventures into applying Artificial Intelligence to reading faces are just part of the enthusiasm that surrounds a new technology. But people are naturally concerned that A.I. might misattribute qualities to them that will seriously affect their legal and occupational status.

Maybe A.I. will make a true science out of physiognomy. But perhaps the human desire to quickly read and judge people by their faces is, itself, a flawed idea.

Comments

I agree, Bob. AI should be disregarded except for science studies.

There was an episode of “Have Gun – Will Travel” in which a scientist traveling with Paladin (Richard Boone) set out to measure the dimensions of a criminal’s skull in an effort to support his theory that criminals had certain skull measurements. Of course, the episode was fiction. However, I understand there was indeed such a researcher back then. I personally discount the theory as rubbish. Furthermore, AI results will also be rubbish and cannot be counted on for accuracy. Remember, AI is a product of human innovations including hardware and software, programs and data. If there’s a bug in your program, the results are skewed. If there’s inaccurate information in the data being fed into the program, the results will be skewed too. Remember the old adage “Garbage in, garbage out” and go with that.

AI will have its purposes and many uses which are yet to be tapped. It’s still evolving with a long way to go. Will it ever be perfect? Likely not. Right now one needs to be cautious when trying to use it because it still is in its “Wild, Wild West” infancy. I do see a future use with AI in the Financial World once it is more refined. But that may be some years away from now.

Can anyone remember how it was back in the 1970s & 1980s when the Data Processing and Computer Science degrees and jobs were in demand? Now, take a step or two back and correlate that history to what is happening with AI right now. I think you’ll see where I am coming from.

As stated in the last paragraph, this IS a flawed idea. The temptation to trust ‘AI’ to do the work here via hi-tech shortcuts is understandable. Sometimes (I would think) the AI would ‘nail it’ correctly; other times it would be partially right in some aspects and wrong in others. Still other times, it would be completely wrong.

This isn’t to say you can’t judge someone by the vibe they give off as being a jerk, nasty or dangerous and not be correct. These people can ‘look’ or ‘seem’ nice in a still image, but once in their presence, a very different story. Same thing with less attractive people erroneously being perceived in a negative manner, when the opposite is true.

In both cases, what we perceive initially, with nothing else to go on, can be accurate or inaccurate. For AI to “weed out” people based on a likely falsehood is unfair (especially in hiring) to the people hired or rejected on that basis only. Not even getting a face-to-face interview to either pass or be passed over is pretty lousy. It’s a slippery slope, and we’ll have to see how it plays out in the coming years.