Mrs. Caraway Goes to Washington

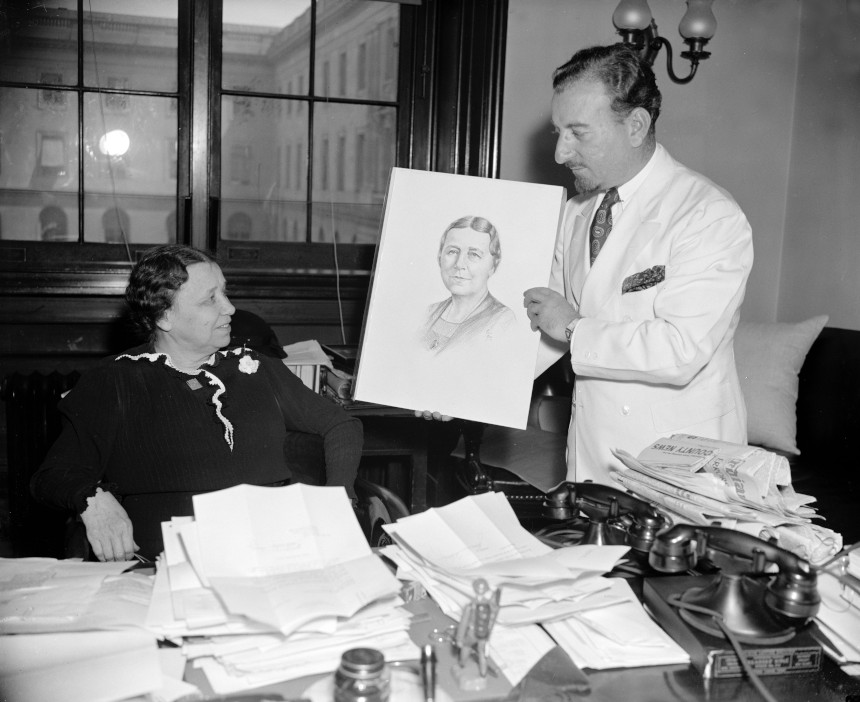

The first woman elected to the U.S. Senate is not a household name. That woman, Hattie Wyatt Caraway of Arkansas, kept a very low profile. She is not considered a political trailblazer. Indeed, she voted with the rest of the Southern delegation against the Anti-Lynching Bill of 1934, intended to make lynching a federal crime. But though her name has become a historical footnote, Caraway, who was born in the shadow of post-Civil War Reconstruction and died at the dawn of the atomic age, offers a fascinating study in the challenges facing women in American politics.

Caraway won a special election to the Senate in 1931 after the death of her husband, Democratic Senator Thaddeus Caraway. This was not without precedent for a politician’s widow, because the move allowed the “real candidates” — the men — time to prepare their campaigns. But Caraway, at age 54, shocked the powers-that-be by announcing her plans to run in the 1932 general election.

In making the decision to run for a full term in the Senate, she knew she was breaking new ground for women. Until Caraway’s election, only one other woman had even set foot in the Senate, the self-styled “World’s Most Exclusive Club”; in 1922, 87-year-old Rebecca Felton had been allowed to sit in the chamber for a day as reward for her political contributions in Georgia. Though the 19th Amendment guaranteeing American women the right to vote had been ratified, the idea that government was a man’s sphere had not changed much since abolitionist and women’s rights advocate Sarah Grimké visited the U.S. Supreme Court years earlier in 1853. After Grimké was invited to sit in the Chief Justice’s chair, she stated that someday the seat might be occupied by a woman. In response, “the brethren laughed heartily,” she later recounted in a letter to a friend.

But in 1932, the year the Dow Jones hit its lowest point during the Great Depression, few Americans were laughing. Caraway, a small woman who always dressed in black after her husband’s death, could have easily passed as someone’s visiting grandmother in the halls of the Capitol. Her only previous elected experience was serving as the secretary of a small-town women’s club. But desperate constituents in Arkansas didn’t seem to care who she was, as long as she could help them.

Letters poured into Caraway’s Senate office, pleading for relief. As she read them and observed the action — or inaction — of fellow senators, she came to a startling revelation: Wasn’t a person — any person, even a woman — of moderate intelligence who worked hard, cared about average people, and stayed awake at their Senate desk (many colleagues didn’t) just as useful as those who made bombastic speeches?

Caraway’s three sons, West Pointers and high-ranking Army officers, supported her political aspirations. As she wrote in her journal entry of May 9, 1932, the first time she announced her decision to run: “Well, I pitched a coin and heads came three times, so because [my sons] wish and because I really want to try out my own theory of a woman running for office, I let my check and pledges be filed. And now won’t be able to sleep or eat.” Within two days’ time, though, Caraway seemed to have made peace with the decision, writing in her journal that she would “have a wonderful time running for office” if she could hold on to her dignity and sense of humor. During the campaign, she would need both.

A widespread criticism of Caraway as she entered the 1932 campaign was that she was needed at home to take care of her children, even though they were already grown. Sexism aside, Caraway’s campaign faced another problem: She had no money to run. But she did have a good friend in the flamboyant Louisiana politician Huey Long.

Long genuinely liked Caraway after spending time sitting next to her in the back row of the Senate, and he didn’t have much to lose by throwing his support behind her. Even if Caraway lost, it would not really be considered his fault since the odds were against her winning anyway. But if he were to help her get elected, he would emerge as a political miracle worker, and maybe even secure a rubber stamp for his pet programs. At the very least, he’d gain some ground in his power struggle with Arkansas’s other Senator, Joe T. Robinson.

With Long’s support, Caraway did win the 1932 election for a full term in the Senate, earning more total votes than all six men who ran against her put together. Some political observers said she would not have won without Huey’s help, but she notably defeated her opponents in counties where she and Long did not campaign.

In office, Caraway was dubbed “Silent Hattie” because she rarely delivered the thunderous orations her male colleagues were known for. It should be noted that there were male senators who declined to make speeches as well, but it was Caraway whose silence was labeled.

If she was quieter than most, her record spoke for her. As a senator, she worked tirelessly, earning a reputation as an effective public servant in the troubled times of the Great Depression and World War II. A saying among Arkansans became, “Write Senator Caraway. She will help you if she can.”

Caraway did her best work in committee, where she could speak normally without having to orate from the well of the Senate chamber. In this small setting, she could work to convince others of what average people needed, like flood control. She had seen the devastation in her state when roaring waters washed away entire communities during the Great Flood of 1927, the most destructive flood ever to hit the state and one of the worst in the history of the nation.

When Caraway first ran for office, she ran on the premise that the nation could be served by an average person who knew the price of milk and bread, and who remembered there were people who had none. She walked her talk, carrying her lunch in a brown paper bag, and starting each day by reading every word of the Congressional Record. She never missed a Senate vote or a committee meeting. Nor did she take time away from Congress to campaign, as others did.

In time, she proved to herself that she had a mind as good as anyone’s in the Senate. While serving on the Senate’s Agriculture Committee, for instance, an assignment that mattered to her rural state, she realized that she knew more firsthand about the issues affecting farmers than did what she called in her journal the “manicured men” of the Senate.

The voters of Arkansas, in turn, stood by her. Caraway was reelected in 1938, defeating a strong candidate whose campaign slogan boomed “Arkansas Needs Another Man in the Senate!” She therefore became not only the first woman to be elected to the Senate, but also the first to be reelected. Among her many firsts serving in the Senate from 1931 to 1945, she also became the first to preside over the Senate, chair a Senate committee, and lead a Senate hearing.

But it wasn’t easy being the only woman in the room. Caraway was often ignored by her fellow senators, and she had no mentor. Her position must have often felt insecure. Arkansas’s other senator, the powerful Joe T. Robinson, had very few interactions with her. She presumed it was because he was waiting for a “real” senator — a man — to arrive. Several of her journal entries reflect their terse relationship. On January 4, 1932, soon after Caraway entered the Senate, she wrote that Robinson “came around only for a moment at the instigation” of his chief-of-staff. On May 19, 1932, Robinson inspired her to write, “I very foolishly tried to talk to Joe today. Never again. He was cooler than a fresh cucumber and sourer than a pickled one.” Reflecting on their strained relationship, she once poignantly confessed in her journal, “Guess I said too much or too little. Never know.”

If she felt invisible in the Senate chambers, she was very aware that the press held her in the spotlight. At one point, she wrote in her journal, “Today I almost made the front page as I lost the hem out of my petticoat.” But Caraway didn’t view herself as a firebrand. Rather, she conducted herself as she believed a “Southern lady” would do. In her journal, she notes that she did not appreciate when a reporter “pushed into” her office. “I was terribly indignant,” Caraway wrote. “She got no interview.”

Still, in 1943, she notably cosponsored the Equal Rights Amendment, a piece of legislation that had already been introduced in Congress 11 times and failed each time. The proposal simply read, “Equality of rights under the law shall not be denied or abridged by the United States or by any State on account of sex.”

Caraway was also one of the sponsors of what has been called the most momentous piece of legislation in American history, the Servicemen’s Readjustment Act of 1944, popularly known as the GI Bill. In doing so, she put herself up against powerful congressmen who condemned the bill for being “socialist.” But Caraway, who came from a struggling farm family herself (she had only been able to go to college thanks to the generosity of a maiden aunt), firmly supported the piece of legislation, which contained educational benefits for World War II veterans. She saw it as a manifestation of her belief that education was the key to progress.

By 1944, however, Caraway’s style no longer resonated with voters in the same way. During her bid for reelection, she placed fourth in the Democratic primary. The winner, J. William Fulbright, a wealthy former Rhodes scholar, went on to secure the general election and would serve as a powerful force in the Senate for the next 30 years.

On Caraway’s final day in the Senate in 1945, her years of service earned her the rare standing ovation by her all-male colleagues. Yet, even as they honored her, a statement — which was no doubt meant as a compliment — offers a telling window into what it was like to be the first. “Mrs. Caraway,” one of the men proclaimed, “is the kind of woman senator that men senators prefer.”

More than a half-century after Caraway’s death in 1950, the challenging reality of her precarious position as the first woman to break into the “World’s Most Exclusive Club” still resonates. A few years ago, I was sitting next to Arkansas Senator Blanche Lincoln at a speaking engagement when the Senator opened her daily planner. Inside the front cover, where she would see it immediately, Senator Lincoln had written the words Caraway had written in her diary when she decided to run for the first time: “If I can hold on to my sense of humor and a modicum of dignity I shall have a wonderful time running for office, whether I get there or not.”

Nancy Hendricks writes extensively on women’s history and is the author of Senator Hattie Caraway: An Arkansas Legacy.

Originally Appeared at Zócalo Public Square

This article is featured in the November/December 2020 issue of The Saturday Evening Post.

Featured image: Library of Congress

How Nebraska Farmer Luna Kellie Was Radicalized Before the 1896 Election

In the late 1800s, one Nebraska farmer was beleaguered by an unruly farm, ten children, corrupt bankers, and indifferent politicians. She could have given up. But instead, Luna Kellie did something about it.

For most members of the Nebraska Farmers’ Alliance, a political advocacy group, the work of organizing occurred solely during the Alliance’s meetings and events. Yet for Luna Kellie, the Alliance’s secretary, the work often continued until the wee hours of the morning. As she later wrote in her memoirs, “if I had extra time when others were asleep, I would devote it to writings for the papers.” Kellie’s work for the Farmers’ Alliance eventually drove her to become a leader in the larger populist movement that swept through the U.S. during the 1890s. Yet even as she took on more responsibility, her political life and home life continued to intersect in the same way they had during those late-night writing sessions. While Luna Kellie is far from being the most famous of the populist leaders, hers is the story of a woman driven to political action by personal hardship, making it particularly emblematic of the American political climate in the lead-up to the pivotal 1896 presidential election.

St. Louis was a crowded town in 1876, and both Kellie and her husband J.T. felt that their dream for a safe and prosperous life was becoming more and more improbable in the industrializing city. So when the couple read a railroad advertisement for cheap homestead mortgages in Nebraska, they jumped at the opportunity. As Kellie wrote, “It seemed to me a great chance to see a beautiful country like the pictures showed and have lots of thrilling adventures.” A new life on the prairie also carried with it the promise of financial stability, a safe home, and a happy family. But in reality, Kellie’s new life was anything but the Little House on the Prairie. Instead, the newly Nebraskan farmer found that her farm’s success and her family’s well-being were at the whims of railroad magnates, cutthroat capitalists, and financiers in faraway states.

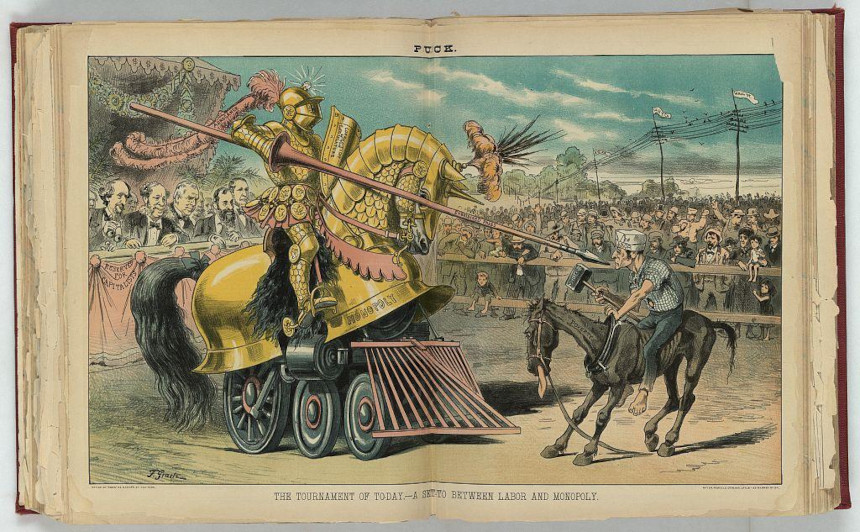

The decades after the Civil War promised a better life. Yet while the end of the 19th century was a time of widespread expansion, it was a time of even more widespread corruption. For freed African Americans, this corruption came in the form of Jim Crow regimes and new systems of oppression. For immigrants, it was political machines and decaying slums. For farmers such as Kellie, corruption was apparent in the practices of railroads and banks that jockeyed for economic dominance over the vast expanses of the American West.

The end of the 19th century is called the Gilded Age on account of the decadence exhibited by urban capitalists such as Andrew Carnegie and John Rockefeller, yet this same monopolistic impulse extended across the country. Kellie was quick to point out how such corruption was at play in Nebraska, writing that

even after they [the railroads] had made 4 times the necessary ‘expenses’ had they [only] been content with a profit of 1 million a month instead of 2. If they knowing the farmers were raising their stuff for them below cost of production had said we will divide the profit on each haul of wheat and corn each carload of stock with you we would not only [have] been enabled to keep our place and thousands more like us but would have been enabled to live in comfort and out of debt.

One bad harvest was all that stood between most farmers and financial ruin, a fact that hung like a shadow over Kellie and her family. Midwestern homesteads were plagued by environmental hazards, ranging from crop-eating grasshoppers to tornadoes and disease. Kellie experienced all of these trials, with the extra challenge of raising ten children at the same time. Additionally, the mortgage on Kellie’s homestead was handled by an apathetic bank in distant Boston. As the bank continued to raise interest rates, Kellie and her family faced the very real possibility of losing their land entirely. To combat this fear, she began selling vegetables in nearby towns as a source of supplementary income, yet she knew that quick cash was not enough to remedy the challenges her family faced. She had realized that her failure to find success and happiness in Nebraska was the result of an economic system that disempowered prairie farmers for the betterment of railroads, banks, and the bottom line. And so Kellie turned her energy towards organizing.

Farmers around the country were beginning to identify the systemic roots of their poverty (including Kansas farmers who eventually brought their issue to the House of Representatives). However, both major parties were controlled by northern industrialists who had no inclination to support homesteaders. As a result, the populist movement emerged in different pockets throughout the country, eventually coalescing as a third-party option to fight for the livelihood of farmers.

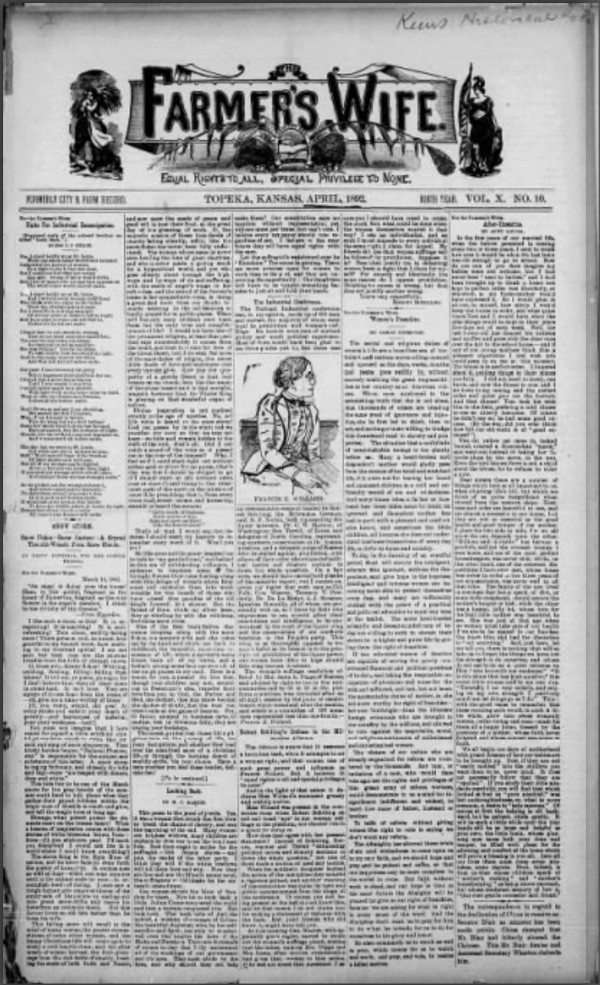

Though it may not have been clear at the time, Kellie was at the forefront of the populist movement in Nebraska. Both she and J.T. were members of the Nebraska Farmers’ Alliance, an organization that held town hall meetings and created literature that offered Nebraska homesteaders a voice in national politics. However, Kellie insisted that “the alliance at this time was not supposed to be political.” Instead, it was simply defending the work and lives of prairie farmers.

Still, Kellie continued to become more involved (much more than her husband) in the Farmers’ Alliance. She was elected as the organization’s secretary and took on the job of editing the Alliance’s newspaper, The Prairie Populist. In the paper, Kellie published biting condemnations of the railroads, bankers, and economic practices that had caused her family so much hardship. Her political awakening also extended beyond the needs of farmers. Kellie’s agency in the Farmers’ Alliance made her increasingly adamant about the need for women’s suffrage, and she soon took on the additional task of traveling to conventions around the country to fight for the vote. As The Scranton Tribune noted of one of her speeches, she “exerted her influence in favor of universal suffrage, undaunted by the fact that she was obliged to carry her baby with her during the long journey.” Kellie took on all of these new responsibilities while continuing to work on the homestead and raise her children, a feat that only strengthened the intensity of her political engagement.

As she traveled, Kellie earned a reputation for her fiery speeches. She was a songster, a type of public speaker who wrote political lyrics to well-known folksongs and would intersperse bits of verse throughout addresses. Luna traveled throughout Nebraska and its bordering states, engaging audiences of like-minded farmers with her urgent, song-infused speeches. The most notable of Kellie’s addresses was her “Stand Up for Nebraska” speech, which featured passionate lyrics about the deplorable practices of business monopolies and the financial security that Nebraska’s land should have provided:

Stand up for Nebraska! and shame upon those

Who fear the extent of their steals to disclose.

Who say that she cannot grow wealth or create;

But must coax foreign capital into the state.

Such insults each friend of the state deeply grieves:

Stand up for Nebraska and banish her thieves.

Through the Farmers’ Alliance, Kellie found her political voice to speak against the “foreign capital” of railroad companies and the larger wealth gap that was present throughout the United States. However, as the populist movement ballooned into a national third party, Kellie was quick to realize that the local roots and honest message of the Farmers’ Alliance were being corrupted. In the lead-up to the 1896 presidential election, the wildly successful populist candidate William Jennings Bryan agreed to combine forces with the Democratic Party and run as their candidate. So-called “fusionists” were in favor of this decision. People like Kellie, who believed that the populist movement’s central mission of helping farmers was being betrayed, were not.

Kellie continued organizing for the Farmers’ Alliance, but her premonitions were partly realized. Bryan lost the 1896 presidential election, and soon thereafter the Populist Party lost steam. It was perhaps the movement’s only chance at success on a national scale, and it was squandered. The community organizing that was the bread and butter of the populist movement faded into obscurity, and soon enough the Nebraska Farmers’ Alliance also disbanded. Kellie continued speaking at conventions and fighting for women’s suffrage, but her political fervor waned.

Kellie continued working on her homestead, and many years later wrote a memoir for her family (which has since been published and serves as the principal source for this article). Quite poetically, she scribed the story of her life on the back of unused Farmers’ Alliance certificates. In its closing pages, Kellie offers a disillusioned soliloquy to the results of her political work. She writes, “And so I never vote [and] did not for years hardly look at a political paper. I feel that nothing is likely to be done to benefit the farming class in my lifetime. So I busy myself with my garden and chickens and have given up all hope of making the world any better.”

It is a heartbreaking sentiment coming from a woman who contributed so much to the populist movement, yet even Kellie’s own words might fail to capture the full scope of her impact. Luna Kellie’s goal was never to have a career in politics; still, she found widespread acclaim as a speaker, writer, and organizer. She was driven to politics by personal hardships, and even though the main cause she fought for may not have found success, she did succeed at giving a voice to the great number of people who faced problems similar to hers.

Featured image: Library of Congress

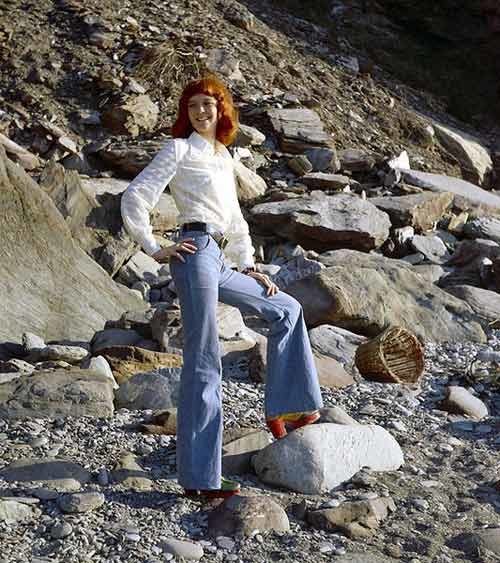

The Mary Tyler Moore Show Remains a Television Landmark

It’s easy to say that a show redefined television, but it’s much harder to prove. In the case of The Mary Tyler Moore Show, you might say that the proof is all around. The series recreated the mold of the ensemble comedy. It changed the way that comedy shows were directed. It had a cast that was strong enough to spin off three separate characters into their own series (one of which was a drama!). And it wasn’t afraid to engage in very serious topics, including some that were taboo for the time. The show still shows up on “Best of” lists, including best writing, acting, and direction, as well as Best Finale and Funniest Moment (seriously, the Chuckles funeral). It’s a program where everything still holds up remarkably well five decades later. That’s right; The Mary Tyler Moore Show launched 50 years ago, and TV is much better because of it.

Series co-creator Allan Burns started writing in animation, working on Jay Ward productions like The Rocky and Bullwinkle Show. He co-created The Munsters and worked as a story editor on Get Smart. James L. Brooks broke into TV news in the 1960s on the writing side. Brooks met Burns at a party, and Burns got him TV writing work. After working on several shows, Brooks created Room 222; when he left after the first year to develop other projects, he got Burns to come aboard as producer. Soon after, Grant Tinker, a programming executive at CBS, hired the duo to create a show for his wife. His wife happened to be Mary Tyler Moore, who was already beloved and famous for her long-running role as Laura Petrie on The Dick Van Dyke Show. Leaning on Brooks’s background, they decided to build the show around the goings-on in a metropolitan TV newsroom with Moore’s Mary Richards as the associate producer at the center.

The nucleus of the newsroom cast was Moore, Ed Asner, Gavin MacLeod, and Ted Knight; Valerie Harper and Cloris Leachman played Mary’s best friend, Rhoda, and neighbor, Phyllis, respectively. Over the years, as Harper and Leachman left for spin-offs devoted to their characters, the cast would add Georgia Engel and Betty White to great effect. The Mary Tyler Moore Show managed to be both entertaining and relevant. Mary Richards was single throughout the tenure of the show, and not forcing the character to be defined by a man or relationship was groundbreaking. Similarly, Rhoda grappled with body image issues. No character was one-note; even Ted Knight’s Ted Baxter, for all his incompetent bluster, had moments of humanity, and the character deepened further when Engel’s Georgette was added to the cast as his wife. The shading of each character, rather than simply assigning a type and relying on it, became a sitcom staple.

While the actors made it all work, the writing and directing had a great deal to do with it. Brooks would apply the dynamics of ensemble building to shows like Taxi and The Simpsons. When you watch an episode like “Chuckles Bites the Dust,” you’re witnessing something akin to an eight-sided tennis match; each character is bouncing jokes and responses back and forth, but the jokes are all rooted in that particular actor’s character. When Mary can’t control her giggles during the clown’s funeral, and later bursts into tears, it’s funny because a) it’s funny, b) it’s believable human behavior, c) it’s all true to what we know about Mary, and d) it’s deeply relatable. If you’re thinking that the same principles apply to many episodes of the show, you’d be right.

Another important facet of the show was the fact that it didn’t turn its back on difficult topics in society. As with the aforementioned personal struggles that Mary and Rhoda had, characters faced personal difficulties or were involved in plots that brought up issues of the day (and today). One memorable moment came in the episode “You’ve Got a Friend”; when Mary’s visiting mother told Mary’s father not to forget to take his pill, he and Mary both replied, “I won’t,” implying, of course, that Mary was on birth control. The show also addressed equal pay for women and many more storylines that remain relevant today.

As the show went on, appreciation for it grew. It pulled in 29 Emmys, including three for Outstanding Comedy Series in 1975, 1976, and 1977. Moore also won three times for Outstanding Lead Actress in a Comedy Series. It was a solid Top 20 entry in the ratings for most of its run, with three years spent in the Top Ten. When the show received a Peabody Award in 1977, it came with the statement that the show had “established the benchmark by which all situation comedies must be judged.” Since the show’s end after its seventh and final season, it has routinely placed on lists recounting the best in television, including lists from TV Guide, Entertainment Weekly, and USA Today. In 2013, the Writers Guild of America put it number six on their list of the best written television series of all time.

After the series ended in 1977, a third spin-off, Lou Grant, was launched. Ed Asner led the series for five seasons, during which it won 13 Emmys, two Golden Globes, and its own Peabody. Plans were made for Mary and Rhoda to reunite in a sitcom; however, those were later abandoned. Mary and Rhoda did meet up again in the 2000 TV movie Mary and Rhoda. A 2002 reunion special brought the entire surviving cast back together (Knight had passed in 1986) for a retrospective look at the series. Today, all seven seasons are available to watch on Hulu.

After the series, Moore worked continuously across film, television, and theater. She earned an Oscar nomination for her role in 1980’s Ordinary People. She and Tinker divorced in 1981, and she married Robert Levine in 1983. A type 1 diabetic, Moore served for years as the international chairperson for the Juvenile Diabetes Research Foundation. She was also active in animal rights and in the restoration and preservation of Civil War history. Moore passed away in 2017 at the age of 80.

The Mary Tyler Moore Show left an indelible mark on television. You can see its DNA in everything from Cheers to Friends to The Office. Any show with a workplace at the center is bound to be compared to it, and any show that features adults talking to each other like adults recalls its boldness. Few shows are daring enough to make you laugh at a clown’s funeral; far fewer could make it one of the most memorable scenes in TV history. The show is a classic by any measure, and its impact will likely never go away. It certainly made it, after all.

During the run of the show, the Post went behind-the-scenes with Moore in a wide-ranging interview from 1974. You can read that story below.

Featured image: Cast photo from the television program The Mary Tyler Moore Show. After the news that most of the WJM-TV staff has been fired, everyone gathers in the newsroom. From left: Betty White (Sue Ann Nivens), Gavin MacLeod (Murray Slaughter), Ed Asner (Lou Grant), Georgia Engel (Georgette Baxter), Ted Knight (Ted Baxter), Mary Tyler Moore (Mary Richards). (Publicity Image from CBS Television; Public Domain via Wikimedia Commons)

Review: Radioactive — Movies for the Rest of Us with Bill Newcott

Radioactive

⭐⭐⭐⭐

Rating: PG-13

Run Time: 1 hour 49 minutes

Stars: Rosamund Pike, Sam Riley, Yvette Feuer, Aneurin Barnard

Writer: Jack Thorne

Director: Marjane Satrapi

Streaming on Amazon Prime

Tackling the life story of pioneering nuclear scientist Marie Curie, Rosamund Pike continues her recent explorations of tough-to-pin-down historic women — pithy females who tossed aside their cultures’ expectations and plunged stubbornly forward, either failing to hear the cries of objection or simply choosing to ignore them.

In A United Kingdom she played Ruth Williams, a London woman who defied the social norms of two countries when she married an African king in the late 1940s. She chain-smoked and growled her way through A Private War, painting an uncompromisingly coarse portrait of war correspondent Marie Colvin. And here in Radioactive, playing the Mother of the Atomic Age, Pike strikes yet another defiant pose as a woman who, despite her obvious brilliance, battles at every turn to make her mark in the male-dominated scientific world of the early 20th century.

It’s a startling performance that commands virtually every moment of the film’s run time, as Pike’s Curie runs into one institutional blockade after another.

Unmarried and fighting to keep her position at a Paris laboratory, in one early scene Curie storms into an all-male (of course) board meeting to demand more lab space — and ends up fired. Facing professional ruin, Pike’s face swims with conflicting emotions: fury, surprise, hurt, and dread fear. But even as her expressions flit from one state of mind to another, she seems to grow in stature to the point where the guys with the cigars — and we — begin to wonder if she’s going to leap at them from across the conference table.

So prickly is Pike’s Curie that we almost gasp in astonishment when she lowers her stoic resolve long enough, but just barely so, to fall in love with and marry Pierre Curie, an uncommonly open-minded fellow scientist (Sam Riley, channeling the same suave charm that made him the perfect alt-world Mr. Darcy in 2016’s Pride and Prejudice and Zombies).

Iranian director Marjane Satrapi, Oscar-nominated for her animated film Persepolis, knows she’s got a good thing going in Pike. When she’s not simply turning the whole film over to her star, she’s taking devilish delight in the world’s turn-of-the-century radiation mania, depicting with dark glee such products as radioactive toothpaste and chewing gum. Of course, the fun is all over when Marie, Pierre, and their fellow scientists start coughing up blood, signaling the awful realities of unchecked radiation.

Screenwriter Jack Thorne (Wonder, The Aeronauts) makes the risky choice of repeatedly flash forwarding to the decades following Curie’s death, reminding us of the world her discoveries created, from the atomic bomb to radiation therapy to Chernobyl. Indeed, at times he literally injects Madame Curie herself into these scenes, a ghostly witness to her complicated legacy. It doesn’t always work — Curie’s life is compelling enough without resorting to a tricked-up narrative — but the ploy does serve to remind us that although the events here unfolded more than a century ago, some of the modern world’s most profound dilemmas harken back to that dusty, irradiated laboratory in pre-World War I Paris.

In any case, all is forgiven whenever Pike is on the screen. History is always more fun when filmmakers leave the rough edges intact, and Radioactive does just that — thanks mainly to the superb work of an actor who thrives on showing those edges in stark, supremely human, relief.

Featured image: Rosamund Pike in Radioactive (Photo Credit: Laurie Sparham; StudioCanal/Amazon Studios)

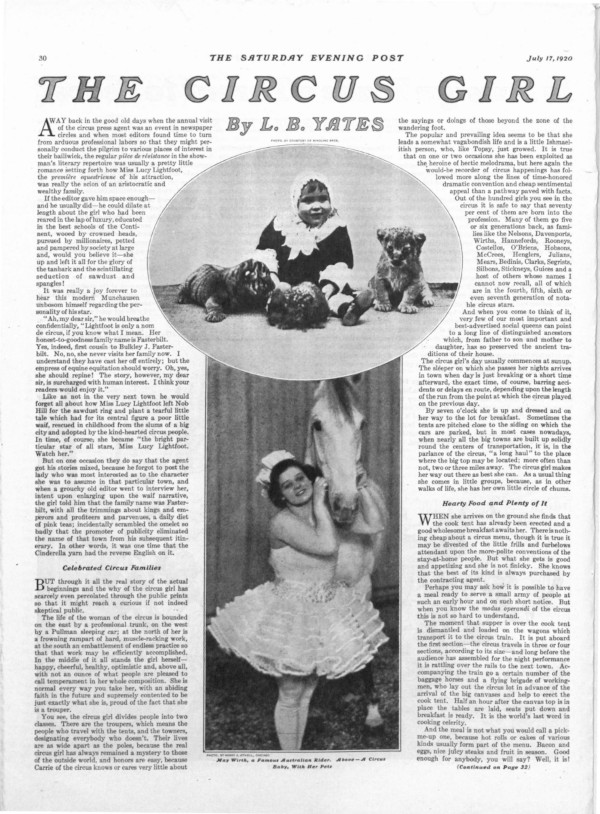

100 Years Ago: The Women Who Ran Off with the Circus

In a time before television or Twitter, the traveling circus was a dominant, all-American form of entertainment. The young and old, rich and poor alike would gather to witness the exotic animals and superhuman acrobatics under the big top each summer. Traveling circuses would shut down entire towns with their parades and engulf the country in “sawdust and spangles.”

The larger-than-life showmen behind the country’s great circuses, like P.T. Barnum, James Bailey, and the Ringling Brothers, are still household names, but many of the performers, daredevils, and animal trainers who astounded their audiences and made weekly headlines have been forgotten.

Part of the appeal of circus folk lay in the mystery surrounding them. Who were they and where did they come from, these people who descended on the most provincial towns with strange talents and glamorous costumes? At the turn of the century, the circus spotlight began to shine on women, highlighting their athletic talents juxtaposed with dainty beauty.

Historian Janet M. Davis writes in The Circus Age that “In an era when a majority of women’s roles were still circumscribed by Victorian ideals of domesticity and feminine propriety, circus women’s performances celebrated female power, thereby representing a startling alternative to contemporary social norms.”

According to “The Circus Girl,” an article by L.B. Yates that appeared in the Post 100 years ago, the intrigue of the female circus performer was heightened by press agents who fed local papers fictional accounts of some rich society girl or poor waif who had found a calling as a trick horse rider in the circus. Such stories were usually fabrications, Yates claimed, since the vast majority of circus performers were born into the profession.

But the treatment of this new crop of female performers, in the press and in the culture, was a tightrope act of sorts. The circus offered a home for some outsiders and castaways and provided independence and adventure for its female stars. For audiences, it afforded titillating, exotic glimpses of the limits of the human body while promising to uphold decency in its ranks.

The women of the American circus during its golden age worked tirelessly to perfect their acts, stealing shows and breaking stunt records at a unique time in history that straddled old conventions and the new promise of suffrage and feminism.

Lillian Leitzel

“I resent having people come to my tent, stare at me as though I were a freak and then turn away laughing, as if they’d seen some wild animal,” the famous aerialist Lillian Leitzel told the Post in 1920. “They seem to assume that circus people have not got beyond the primitive stage of the cave man and are an aggregation of unlettered louts wholly devoid of the commonest sense of social amenities.”

Leitzel was indeed rich in social amenities, and she was also just plain rich. According to historian Janet M. Davis, the performer was making up to $200 a week in 1917 with Ringling Brothers (worth more than $4,000 in 2020), and it wasn’t uncommon for female circus stars to rake in more than their male counterparts. She had her own train car that contained a piano, and at each stop she would dress in her own private tent. By the 1920s, she was pulling in $500 per week, according to John Culhane in The American Circus.

Known as a brassy prima donna, Leitzel grew up in a Czech circus family and began training in the gymnasium at 3 years old. Her signature act was to bound up the rope and hold her hand in a loop while throwing her body up and around, dislocating her shoulder over and over again as she performed her “one-arm planges.” As a percussionist carried on a drumroll, the audience would count her swingovers, one time as many as 249.

“Accidents? Oh, well, they’re liable to happen any time,” Leitzel told the Post. “But I never think of that. Whenever a performer gets to studying about the chances he or she is bound to take, he has outlived his usefulness. An acrobat must have hundred per cent nerves.”

She entertained other elite performers, senators, businessmen, and especially children in her private car. She was known for giving parties for all of the circus children and lavishing gifts and sweets on them, possibly because she was stripped of a childhood herself, according to Culhane.

Leitzel encouraged women to exercise to stay healthy, in spite of lingering Victorian notions that athleticism made women ugly and manlike. “Down with the corset,” she told newspapers in 1923. “Put a brick in the atrocious garment and hurl it into the Niagara River.” She encouraged women to take up swimming or some other sport they enjoyed, promising “you can eat what you want and work off the energy in exercise.”

Leitzel married the Mexican trapeze artist Alfredo Codona, and their passionate celebrity marriage was rife with jealousy and resentment. When Leitzel traveled to Europe in 1931, Codona went separately with an equestrian with whom he was having an affair. In Copenhagen, Leitzel was performing her one-arm planges when the brass ring she was holding snapped, and she fell 20 feet onto her head. Although she insisted on continuing her act, her handlers sent her to the hospital. She died the next morning from a concussion.

Mabel Stark

Mabel Stark was working as a nurse — with a stint as a burlesque dancer — when she found a calling to work with big cats in Venice, California around 1911. She stumbled onto the grounds of the traveling Al G. Barnes Circus and became obsessed with Bengal tigers. Stark joined the show, and within a few years she was a big cat trainer. Stark was the first American woman to take up the dangerous profession, let alone to achieve such renown.

Over the years, she became one of the most famous tiger (and lion and panther) tamers in the world, joining the Ringling Brothers in 1920. Newspapers gushed over her unique wrestling act. Stark would roll around with any of her 18 tigers, giving the impression of a perilous struggle.

Although Stark spent most of her time with her cats and treasured them dearly, she never lost sight of the risk of working with tigers. She gave some insight to a Public Ledger reporter in 1923: “The tone of the voice, the determination of it, has a great deal to do with subduing wild animals. Don’t let uncertainty or fear creep into your tones, or you’re gone. A great life, so to speak, if you don’t weaken.”

As with many who work with predator animals, Stark sustained serious injuries throughout her career (according to a profile in the St. Louis Globe-Democrat in 1950). In Maine, she was mauled in the ring and required 378 stitches. In Arizona, she was bitten by a tiger named Nellie and finished her act with a limp left arm. In spite of it all, she continued to defend her fierce friends and the connection she had with them.

Stark’s story ultimately ended in tragedy. After retiring from the circus in the late 1930s, she settled in at an amusement park called Jungleland. Stark was let go for insurance reasons, and then one of her tigers escaped and was shot. The devastated near-80-year-old took her own life.

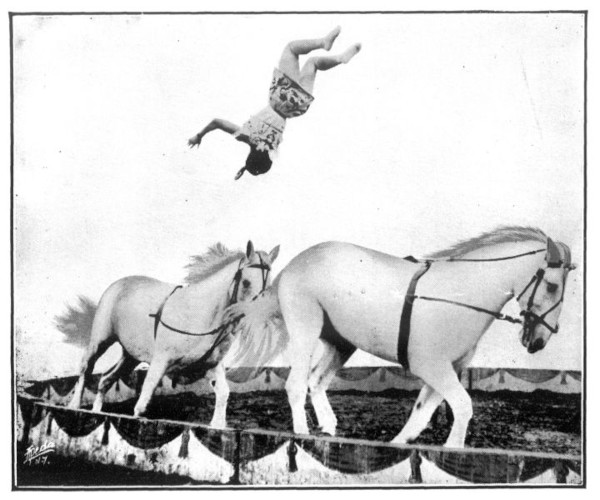

May Wirth

Billed as “The World’s Greatest Rider,” May Wirth was only 17 when she made her first appearance in the Ringling Brothers’ show at Madison Square Garden. The Australian transplant wore a giant hair bow and leapt from horse to horse, completing flips and twists that dazzled audiences and, sometimes more so, other riders.

Wirth was an orphan who performed as a five-year-old contortionist and was eventually adopted by the down-under circus rider Marilyas Wirth Martin. According to the Braathens, two Madison, Wisconsin circus buffs writing in The Capital Times in 1973, Will Rogers witnessed young Wirth riding in her home country and predicted “the day would come when there would be but two types of bareback riders in the world, May Wirth and all the others.”

When the Braathens asked about her legendary forward somersault, Wirth recalled that Mr. Ringling was skeptical that anyone could complete such a trick, and when she tried it for him: “I missed it and fell on my back right in front of Mr. John. I got up like a streak of lightning, ran and vaulted onto the horse again and did the stunt over for him, this perfectly, and did the somersault feet to feet. That earned me my first season’s contract with John Ringling.”

The sweetheart of the circus made the front page of The New York Times after an unfortunate slip in 1913 caused her to be dragged around the track behind her horse by her feet. Nine years later, the paper reported that she had accepted the challenge of riding a bull, and better yet: “Miss Wirth not only rode the bull, reputed to be a most ferocious beast, 3 years old and weighing 2,400 pounds, but she rode him standing on her hands upon his back.”

Wirth retired from the big top in 1937 and went to live with her mother in New York. She later moved to Sarasota, where the Ringling Museum and Circus Hall of Fame was located. She was inducted in 1964.

Featured image: 1890-1900, Calvert Litho. Co., Library of Congress Prints and Photographs Division Washington, D.C.

The Circus Age by Janet M. Davis

The American Circus by John Culhane

Women of the American Circus, 1880-1940 by Katherine H. Adams and Michael L. Keene

Considering History: Sophia Hayden and the Hidden Cost of American Sexism

This series by American studies professor Ben Railton explores the connections between America’s past and present.

Late last week, Massachusetts Senator Elizabeth Warren ended her bid for the Democratic presidential nomination, leaving the primary without any of the ground-breakingly high number of women who had once been part of its slate of candidates. (Hawaii Congresswoman Tulsi Gabbard remains in the race but has received only two delegates and has never polled above low single digits.) While Warren’s campaign and exit were both influenced by a number of factors, her departure has occasioned numerous commentaries on the continued, frustrating reality (particularly when compared to most other nations in the world) that the United States has never elected a woman to its highest political office — a reality particularly worth engaging on the occasion of Women’s History Month.

The presidency is a strikingly visible element of American society, making the historic and continued absence of women likewise quite apparent. But that absence also reflects a far wider and deeper, and yet often more difficult to spot, aspect of our collective histories: the ways in which sexism and the glass ceiling have driven so many of our most talented and impressive women out of their chosen professions, leaving our entire society profoundly diminished as a result. Few American figures and stories encapsulate that hidden cost of sexism better than the architect Sophia Hayden Bennett (1868-1953).

Hayden was born in Santiago, Chile, to a Chilean mother (Elezena Fernandez) and an American father (George Hayden), a dentist who had moved to Chile from his native Boston a few years earlier. When she was six, her parents sent her back to the Boston area by herself, to live with her grandparents in Jamaica Plain and attend school. While studying at West Roxbury High School she became interested in architecture, and she would go on to attend MIT, graduating in 1890 as one of the first two female graduates of a collegiate architecture program in American history (her classmate, Lois Lilley Howe, with whom Hayden shared a drafting room at MIT, was the second).

Despite that prestigious degree, Hayden was unable to find an apprentice position at any local architecture firms and took a job teaching mechanical drawing at the Eliot School, a vocational grammar school in Boston. But less than a year later she learned of a strikingly unique new opportunity: the chance to design the Woman’s Building, one of the planned exhibition halls for the 1893 World’s Columbian Exposition in Chicago. After extensive negotiation, women’s rights groups had convinced the exposition directors to create a Board of Lady Managers composed entirely of women, and in February 1891 that board, headed by the socialite and activist Bertha Honoré Palmer, announced a competition for the Woman’s Building design, open only to female architects.

The competition’s $1,000 prize/commission was significantly less than what was offered to male architects for the exposition’s other buildings, and so Louise Blanchard Bethune, considered the first professional female architect in America, refused to submit a concept. But Hayden did submit, and out of the 13 proposed designs it was her innovative concept, based in part on Italian Renaissance classicism, that the Board (along with Chief of Construction Daniel Burnham) selected as the winner. She traveled to Chicago to begin work on turning that design into construction plans as quickly as possible, as construction needed to begin in the summer of 1891 to be ready for the exposition’s May 1893 opening.

That hugely expedited timeline was only one of many challenges that Hayden would face over the next two years. Despite having chosen Hayden’s design, the Board of Lady Managers identified a number of perceived shortcomings and requested many changes, including the addition of an entirely new third story, which required Hayden to overhaul many other aspects as well. Moreover, Board Chair Palmer decided to take control of the building’s interior design away from Hayden entirely after the architect resisted some of the Board’s other substantial proposed changes. Hayden managed to respond to, complete, and work around such extraordinary requests within that very tight schedule and on budget to boot, and the Woman’s Building was formally introduced at the exposition’s October 21, 1892, dedication ceremony. But shortly after, it was reported that Hayden suffered a possible nervous breakdown (in some reports referred to as “melancholia”) in Burnham’s office, and she was confined to a “rest home” for months in order to recover.

It’s impossible to know precisely the role that Hayden’s gender played in all those developments, although it’s certainly important to note that such medical diagnoses and conditions themselves were hugely gendered in the late 19th century (as illustrated by the long history of the illness known as “hysteria,” as well as related contexts like physician S. Weir Mitchell’s popular “rest cure” for women and the depiction of its destructive effects in Charlotte Perkins Gilman’s autobiographical short story “The Yellow Wallpaper”). Moreover, many of the prominent responses to her design were overtly limited by narratives of gender, as illustrated by architect and critic Henry van Brunt’s argument (as part of a long series entitled “Architecture at the World’s Columbian Exposition”) that the building seemed “rather lyric than epic in character, and [that] it takes its proper place on the Exposition grounds with a certain modest grace of manner not inappropriate to its uses and to its authorship.”

Although Hayden was “cured” in time to leave the rest home and attend the exposition before its November 1893 closing (after which the Woman’s Building, like most of the exposition’s structures, was demolished), she would as far as we know never design another building. An editorial in the journal American Architect lamented that fate while managing to reinforce sexist narratives at the same time, writing, “Miss Hayden has been victimized by her fellow-women. The unkind strain would have been the same had the work been as unwisely imposed upon a masculine beginner.” Hayden returned to the Boston area, eventually marrying local painter William Bennett in May 1900. Their wedding announcement in the Boston Daily Advertiser noted that “both are highly esteemed and respected . . . versatile as well as talented,” but again as far as we know Hayden never again publicly employed her prodigious talents, living her remaining six decades as a private person in their Winthrop, MA home.

What might American architecture, America’s cities, American society have looked like if Sophia Hayden had continued to design buildings? What would American history look like if we had elected a woman president by now (or if women had received the vote before 1920, for another example)? Such questions remain painfully hypothetical and unknowable, reflecting a collective loss that parallels the individual and personal costs of sexism so embodied by a figure like Sophia Hayden.

Featured image: The 24 female MIT students in 1888. Sophia Hayden is in the front row, far left. (MIT Museum)

Memories of a Women’s College in 1942

Today, it’s a time capsule. A Sinatra record playing on my portable record player as I studied for an exam in U.S. History, the little-known “Night We Called It A Day.” Still a favorite. There was a moon out in space/But a cloud drifted over its face/You kissed me and went on your way/The night we called it a day.

The place was my dorm room at Stephens Junior College for Women in Columbia, Missouri, in 1942.

It was a time when men weren’t allowed on dorm floors above the entry lobby except the day of arrival or departure, for hellos and goodbyes and moving trunks. That included fathers. Brothers. Fiancés. It mattered not. And it was standing policy that if you were caught (even seen) in a car with a member of the opposite sex without written proof from home that he was your brother or fiancé, you were subject to immediate and automatic expulsion.

When you went into town for dinner or over to the University of Missouri to see the latest student play, you had to sign out … and back in, with a counselor at the door, which would be locked (depending on your year) at 10:00 or 11:00.

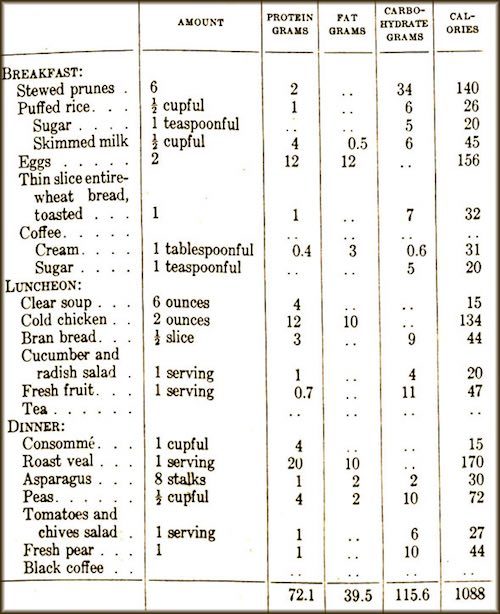

My first year, my roommate was from the Lone Star State. We quickly dubbed her “Texas.” Her father had a grocery store, and her packages from home came in big, big, big boxes. One of the first things I learned in college was that Hershey bars come packaged in boxes of 24 — not a good thing for me. (One of the special offerings of Stephens College was a Diet Table, for those who could lose a few pounds while gaining a wealth of new knowledge.)

The social life of Stephens revolved around the “Blue Rooms” — areas in dorms where we gathered to smoke, because we weren’t allowed to smoke in our rooms or anywhere else on campus. The Blue Rooms also had soft drinks and various snacks, as well as light lunches.

I particularly remember my visits the Sunday afternoons following our trips to town to see the latest movie. One time, it was Now, Voyager, and when I walked into the Blue Room I frequented near my dorm it was obvious who’d also just seen the film — the girls who were lighting two cigarettes and handing one to a friend, as Paul Henreid had done in the film. One of the more famous moments in his romance with Bette Davis, handing Davis the second cigarette.

Another time, everyone was singing, “You must remember this, a kiss is just a kiss, a sigh is just a sigh ….” But not as well as Dooley Wilson in Casablanca.

I don’t remember any war movies then. But the war was a factor in my being there. Stephens was the choice my parents decided upon (principally, my mother) because I was just 16 when I graduated from high school and the war had started. She apparently foresaw that college life would no longer be a Good News movie; indeed, universities would become training centers for the U.S. Armed Services — Army and Navy Air Force pilots and bombardiers — and she liked Stephens’ approach to female education.

Its president, James Madison Wood, felt that while women were entitled to an education — equal, in principle, to that of men — they had special needs and interests. He addressed this with the creation of a group of clinics for the Stephens girls. The clinics were highly publicized, perhaps because of the novel approach or perhaps because of the nature of some of the clinics.

The Marriage Clinic drew my second year roommate, who left school to marry her hometown sweetheart, then stationed at a Naval Air Training base. There was a Budget Clinic, to which my father kept pointing me. At a Fashion Clinic, I had a formal designed for me. And a Glamour Clinic — the one that had gained the most national attention. The one to which Stephens girls would go to learn how to make the most of their physical attributes: hair, make-up, lipstick colors.

My mother, for whom the Glamour Clinic was an added attraction to the other lures of Stephens, wanted me to get an appointment shortly after classes started. But, what with one thing and another — getting textbooks, settling in to classes — I had not made an appointment. Then, word spread about those who had.

They came back with their hair cut!

If that doesn’t sound like much today, it was the kiss of death then. We wore our hair long. Mine — even with the upturned curl of an incoming wave — grazed my shoulders. No way was I going to go near a place that wanted to cut my hair! And so I resisted my mother’s increasingly persistent questions in her letters as to when I had an appointment at the Glamour Clinic. That was an important feature she’d noticed in the school catalogue. Why didn’t I take advantage of all my opportunities at Stephens?

As the time for Christmas break approached, I felt I could not go home without going to the Glamour Clinic. It meant so much to her. Could I make clear I didn’t want my hair cut? So I went to make an appointment. The only one available was a Saturday in December. I booked it, not realizing it was also the Saturday of Hell Day for sorority pledges.

We were told by our sororities what costume to prepare and wear that day. And we would march around the main part of campus after lunch in our costumes. As with all things then, World War II affected Hell Day.

My fellow pledges and I were Flying Tigers, the name of a group of American volunteers who fought the Japanese in the China Burma Theatre before the Japanese bombed Pearl Harbor.

To become a Flying Tiger in Columbia, Missouri, I went into town and bought a suit of men’s long underwear, a package of Rit dye — orange, obviously — and a packet of black crepe paper. I duly dyed the suit and sewed strips of black crepe paper to simulate stripes. I braided some of the strips of black crepe paper for the tail. I made a propeller from the cardboard at the back of a notebook pad and, later, stuck the circular center to my forehead, also front and center, the blades vertical for visibility.

On that December morning I dressed in said outfit and headed across campus to Senior Hall, where all pledges were to dine that morning. Then I returned to my room, ready to head out not to a Saturday class but my appointment at the Glamour Clinic.

I appeared in the doorway as a faculty-type lady opened up for the day. I might be there for my mother but no way was I having my hair cut. Jaw set, words as emphatic as it was in my power to make them, I said, more proclamation than statement: “I won’t let you cut my hair!”

Something about the reaction of the Glamour Clinic lady made me realize that was not my problem. The frozen smile was a clue.

The faux Flying Tiger before her was not only eye-catching for starters, I was all the more noticeably overweight in the suit of men’s long underwear dyed a Technicolor-bright orange. I bulged in all the wrong places. Corrugated cellulite comes to mind. Sometimes the bulges synchronized with my tiger stripes, sometimes not. The wet snowfall had loosened my propeller, not securely stuck to my forehead, and it fell off. When I bent to retrieve it, my tail caught on something and almost came off. I quickly secured it.

Suffice to say, I went through the routine/procedure/protocol of the Glamour Clinic, which was designed to help me learn how to make the most of my appearance, in record time.

I don’t remember a thing until the end, when the Glamour Clinic lady had me seated at one of those basins where you get your hair washed at beauty parlors. I was in the chair, tipped back, head in the big metal tray, as she talked about my eyebrows. She may have plucked one or two stragglers. I just remember she was talking about my eyebrows when a voice was heard in the doorway. I say voice because I was flat on my back and the Glamour Clinic lady had her back to the door.

“I know I’m early, but ….”

“No. No,” said the Glamour Clinic lady. “Come right in.”

It would be hard to imagine a more sincere welcome. The Glamour Clinic lady’s heartfelt Thank you! to a merciful god for her deliverance.

No need to imagine the rest. She snapped me up to a sitting position with such speed that it’s a wonder my eyeballs aren’t still spinning.

When I looked to the doorway, I understood the silence that followed. Settled over the otherwise empty expanse of the Glamour Clinic.

A speechless silence.

The next appointment was having trouble getting in.

She had outdone my Flying Tiger. With the help of some well-shaped cardboard and gray paint, she was a battleship.

Returning to my room, I sat down at my desk, pulled out a piece of stationery, and, pen poised, began.

Dear Mother,

About my appointment at the Glamour Clinic ….

Featured image: College women playing bridge, 1942 (Wikimedia Commons)

Susanna White Winslow: Pilgrim First Lady

The Pilgrims were a central part of Thanksgiving when I was a child, and well into my adulthood. The Pilgrims offering thanks for a bountiful harvest that first fall in the New World — in October 1621, not today’s November — were the centerpiece of the holiday.

It’s different now.

When Thanksgiving rolls around each November, the Pilgrims have so faded from our history that their story might have been written in invisible ink.

If you think 1620 is so long ago — who cares when the Bears are kicking off against the Lions — let me tell you about one of them, especially appropriate this year, when women have been so much in the news.

Susanna Jackson White.

She was one of the passengers on the Mayflower when it sailed out of Plymouth Harbor in England on Sept. 16, 1620. It was a small ship, by today’s standards, 90 feet long and 27 feet wide. A tennis court is 78 feet long, 26 feet wide (for singles play). The Pilgrims didn’t have assigned cabins. They crossed in the cargo area … because they were the cargo. And a larger one than intended. The Pilgrims had started out with two ships, but the second, the Speedwell, developed a leak after sailing — not just once, but twice — and they had to return to England. After the second return to port, almost 40 of the Speedwell passengers were added to the original 65 on the Mayflower. The shortage of food, the rigors of a crossing so rough that one storm caused the ship’s pitching to crack a main beam, must surely have been the more difficult for Susanna, who was seven months pregnant.

The bad weather persisted after they reached America, sighting the “hook” of present-day Cape Cod. They tried repeatedly to sail south to their original destination, the Colony of Virginia, but the winter weather and rough seas forced them back to Cape Cod. The delays in sailing, the weather that then kept them from going farther south, meant they would have to set up their settlement in the New World in New England.

While the ship lay at anchor off Cape Cod at what is now Provincetown, Susanna’s husband, William White, on November 21, 1620, with the 40 other men signed the Mayflower Compact — the document that envisioned a government of laws, not men, a government that took its consent from the governed. The document that is considered by many to be the keystone of the Declaration of Independence and the Constitution of the United States.

While the ship lay at anchor, Susanna also produced her contribution to history: the first English child born in New England, a son named Peregrine.

It took a month for the Pilgrims to find a site for their settlement, a cleared area that had once been an Indian village on a good harbor. December 20, the Mayflower dropped anchor at Plymouth. Whether or not they actually stepped out on Plymouth Rock when the Mayflower shallop (think lifeboat or really big rowboat) reached the beach is not clear. Historians dismiss it, even the story that has come to be legend for some, that the first to do so was Mary Chilton, 12. But anyone who has travelled with children constantly asking, “Are we there yet?” can easily imagine her eagerness to jump out, rock or not.

To give the families safe shelter aboard ship while they built their houses ashore, the captain of the Mayflower delayed his return voyage to England. But the days were cold. New England winter cold. And after the long voyage on short rations, a “General Sickness” began to take its toll. William White was one of the 51 who died that first winter — February 21, 1621. The loss to Susanna, left alone with a 5-year-old son and new baby, was compounded by the danger inherent in their steadily shrinking number; indeed, to keep the Indians from knowing how few there were, the dead were buried at night, in a common grave that was not marked.

With the arrival of spring and warmer weather, the captain of the Mayflower made preparations to sail. He offered to take with him anyone who wished to return to England. Although 51 of the 102 had died — literally, half — not one went back.

Still, one can only begin to imagine Susanna’s feelings as she stood on the desolate shore, even the Whites’ two servants dead, her small son at her side and six-month-old baby in her arms, watching the ship sail away.

Leafing through an old book some years ago — an old-fashioned book printed on paper so thick it might have been used for Tiffany Christmas cards — I came across an illustration. The full-page drawing, with a decidedly romantic quality, as I remember it, showed Susanna looking up, to see Edward Winslow, whose wife had died March 24, watching not only the departing ship but Susanna. Whether this has any basis in fact, I know not. I do know Susanna White and Edward Winslow were married May 12th.

Anyone feeling the marriage showed undue haste should remember that the society as well as the settlement was built around the family; hence, in an age when death was a commonplace event, it was not unusual for widows and widowers to remarry quickly. Just take a stroll through an old cemetery.

The wedding of Susanna White and Edward Winslow made Susanna the first English bride in New England.

The spring also marked better times. A treaty of peace was negotiated with the local Indian Chief Massasoit. And Squanto, a Native American who spoke English because he’d lived in England (long story), taught the Pilgrims how to use locally caught fish to fertilize the land and how to plant corn … five kernels. (One for the blackbird, one for the crow, one for the cutworm, and two to grow.)

That fall, “Our harvest being gotten in,” as Edward Winslow put it, “our governor sent four men on fowling, that so we might after a special manner rejoice together.” The 90 Indians who came contributed five deer. Susanna, one of four adult women to survive the first year, presumably was there, perhaps basting one of the fowl.

When Edward Winslow, long a leader of the colony, became the Governor of the Plymouth Colony, serving in 1633-1634 — also, 1636-1637, 1644-1645 — Susanna became the First Lady of the colony.

In 1633 it was a much larger colony, land grants having been awarded in late 1627. Although it is not known exactly when they did so, Myles Standish and John Alden, of Henry Wadsworth Longfellow fame and countless grade school Thanksgiving pageants, moved north to Duxbury. The Winslows went on to Green Harbor in what is now the town of Marshfield, where they built a handsome residence, “Careswell,” named for a family seat of Winslow’s in England. Their move north was prompted not only by the granting of land but by the fast-growing Massachusetts Bay Colony at Boston, which offered a market for the cattle they could raise.

The growth of the Massachusetts Bay Colony signaled the overshadowing of the Plymouth Colony. By 1643, Plymouth had joined the New England Confederation. Josiah Winslow, the first of Susanna and Edward Winslow’s children who lived to adulthood, followed in his father’s distinguished footsteps. Educated at the new Harvard College, he served as the Plymouth Commissioner to the Confederation.

During one of Edward Winslow’s trips to England on behalf of the colony, he was appointed by Oliver Cromwell to head an expedition against the Spaniards in the West Indies in 1655. He came down with fever on the voyage and died May 8, 1655.

Susanna and Josiah were mentioned in his will. The fact that Edward Winslow made no provision for Susanna in London, where he was living at this time, leads historians to conclude that she had remained at their home in Marshfield, Mass., and was still living.

Josiah Winslow continued to follow in his father’s footsteps.

When in 1673 he became governor of the Plymouth Colony, Susanna became the mother of the first native-born governor of any of the American colonies.

Josiah died December 18, 1680, in Marshfield. Because he made no mention of his mother in his will, which was dated July 2, 1675, it is assumed she was dead.

Just as there is no record of the year of Susanna’s death, there is no record of where she is buried, although it is thought she rests in the Winslow cemetery in Marshfield.

A year or so ago, though, I chanced upon the possibility that she is actually buried in the Old Granary Burying Ground in Boston. Laid out in 1660, originally part of the Boston Common, it is a popular site on The Freedom Trail today, for it is the final resting place of the victims of the Boston Massacre and three signers of the Declaration of Independence (John Hancock, Samuel Adams, and Robert Treat Paine), not to mention the parents of Benjamin Franklin. If Susanna is buried there, then 150 years later, give or take, in May 1818, the empty grave to one side of her final resting place was occupied by another individual who has a place in our history: Paul Revere.

Although this cannot be substantiated, I pass it along, because there is a grave next to Paul Revere that has no gravestone. And John Endicott, the first governor of the Massachusetts Bay Colony, who died March 15, 1665, and was thought for more than 300 years to have been buried elsewhere, is actually in the Old Granary Burying Ground. His gravestone had been destroyed.

Why not Susanna’s?

Why not Susanna in the historic old cemetery on the Freedom Trail?

- The mother of the first English child born in New England.

- The first bride in New England.

- The First Lady of the Plymouth Colony … on three occasions.

- The mother of the first native-born governor of an American colony.

And Susanna was just one chapter in the Pilgrim Story.

That deserves a place at the table any day. Particularly, the Thursday each year that is Thanksgiving.

Featured image: “The First Thanksgiving at Plymouth” (1914) By Jennie A. Brownscombe (Wikimedia Commons)

The Heroism of Women in the Boer War

When news came that the Boer women of South Africa were fighting alongside men in their war against the British, the Post applauded.

In war’s long, dreary hours of waiting, the quality of character that can endure quietly represents the very highest bravery that human nature is capable of, and in this greater heroism woman has almost a monopoly.

In their heroism, women are always better than men.

And it’s not only in great things that woman shows her nerve. The other day in Naples, two Boston ladies were leaving a shop. A man seized the purse of one of them, whereupon she took him by the throat, gave him a good shaking, slammed him upon the ground, recovered her property, and then in her cool New England way told him to move on. We can scarcely pick up any newspaper without finding a story of a woman capturing a burglar, stopping a runaway, or doing something of the instant sort that is the very essence of nerve; and we should not forget in this category the Connecticut widow who, although dreadfully afraid of mice, upon finding a lion from Mr. Barnum’s show in one of the stalls of her stable, deliberately whipped the beast away and sent him cowering down the road.

– “The Heroism of Women,” editorial by Lynn Roby Meekins, April 21, 1900.

Featured image: SEPS.

Considering History: The Role of Women in the Lynching Epidemic

This series by American studies professor Ben Railton explores the connections between America’s past and present.

On February 14, Senator Kamala Harris introduced legislation into the Senate that would for the first time in American history make lynching a federal crime. The Justice for Victims of Lynching Act, originally drafted by Harris in June 2018, does more than just criminalize lynching—as its name suggests, it seeks to remember and in some small ways make amends for the thousands of lynchings that took place between the end of the Civil War and the 1960s. “With this bill,” Harris said, “we finally have a chance to speak the truth about our past and make clear that these hateful acts should never happen again. We can finally offer some long overdue justice and recognition to the victims of lynching and their families.”

The horrific stories of lynching are intimately intertwined with African American history, a significant factor in Harris’s choice to introduce the bill during Black History Month. Yet as with any American histories, lynching’s connections extend to every national community. For Women’s History Month, Harris’s prominent role in these unfolding 21st century accounts can also help us remember the fraught, contradictory, and crucial links between American women and the lynching epidemic.

Perhaps the single most jarring defense of lynching was offered by a pioneering feminist activist. Rebecca Ann Latimer Felton, the wife and political partner of longtime Georgia Congressman William Harrell Felton, was one of the Progressive Era’s most prominent and acclaimed women’s rights activists: an advocate of women’s suffrage, equal pay, and many other feminist causes, she became the first woman to serve in the U.S. Senate when, at the age of 87, she was honored with a single-day appointment as Senator from Georgia on November 21, 1922. Yet she was also a white supremacist and racist who openly advocated for the systematic lynching of African Americans.

Felton made her case for lynching most vocally in an August 1897 speech to the Georgia Agricultural Society. While she identified a number of problems facing (white) farm wives in the state, she focused in particular on “the black rapist” and the threat he posed to those women. She repeated the canard that Reconstruction had given African Americans “license to degrade and debauch.” And in response to those imagined terrors, she argued, “When there is not enough religion in the pulpit to organize a crusade against sin; nor justice in the court house to promptly punish crime; nor manhood enough in the nation to put a sheltering arm about innocence and virtue—if it needs lynching to protect woman’s dearest possession from the ravening human beasts—then I say lynch, a thousand times a week if necessary.”

Felton’s bigoted speech reminds us that the era’s progressive white women far too often allied with the forces of segregation and white supremacy, both to further their movement’s goals and (as in Felton’s case to be sure) out of genuine and deeply rooted racism. Yet as historian Martha Jones has recently argued, the under-appreciated contributions of African American women to the women’s suffrage movement played a crucial role in advancing women’s right to vote. One of the most prominent such African American suffrage activists, Ida B. Wells, also happened to be the nation’s leading anti-lynching journalist and crusader.

The opening pages of Wells’s first book, Southern Horrors: Lynch Law in All Its Phases (1892), reflect the intersections of her women’s rights and anti-lynching activism. In her preface, Wells acknowledges the fundraising efforts of New York City women’s rights organizations that allowed her to publish the book, writing, “the noble effort of the ladies of New York and Brooklyn Oct. 5 have enabled me to comply with this request and give the world a true, unvarnished account of the causes of lynch law in the South.” Wells highlights both her own status as a target of white supremacist violence (when her Memphis newspaper office was burned down) and her courageous response to those attacks: “Since my business has been destroyed and I am an exile from home because of that editorial, the issue has been forced, and as the writer of it I feel that the race and the public generally should have a statement of the facts as they exist.” As an African American woman speaking out against these horrors, she likewise revises Felton’s images of race and gender, noting, “[The facts] will serve at the same time as a defense for the Afro-American Sampsons who suffer themselves to be betrayed by white Delilahs.”

While reports of lynching usually involved African American male victims, the epidemic also extended to other communities of color, including Chinese Americans and Mexican Americans. A recent New York Times article that highlights the histories of Mexican American lynchings in particular reveals another role for American women activists: as contributors to expanded collective memories. That includes the historians upon whose work that New York Times article depends: Professors Monica Muñoz Martínez of Brown University and Laura F. Edwards of Duke University. But it also includes women like Arlinda Valencia, the Texas educator and union official whose ancestors were among the victims of the January 1918 Porvenir mass lynching in which Texas Rangers and ranchers destroyed an entire Mexican American village.

Professor Martínez’s educational nonprofit organization Refusing to Forget has in the half-dozen years since its founding done particularly impressive work recovering those histories and sharing them with audiences of all types. Those efforts include historical markers for particular sites such as Porvenir, traveling and permanent museum exhibitions, and public lectures and conversations. Martínez and her colleagues have discovered a pattern of widespread violence directed not only at individual Mexican Americans, but also and especially at entire communities, with the Porvenir massacre sadly not atypical of these outbursts of collective brutality.

Like Senator Harris, these scholarly and civic historians are working to make the lynching epidemic’s histories more consistently present in our 21st century collective memories. Doing so likewise requires remembering lynching’s complex intersections of race and gender, both in their most destructive and most inspiring forms.

Featured image: The Silent Parade in 1917 in New York City was organized by the NAACP to protest violence toward African Americans. (Library of Congress)

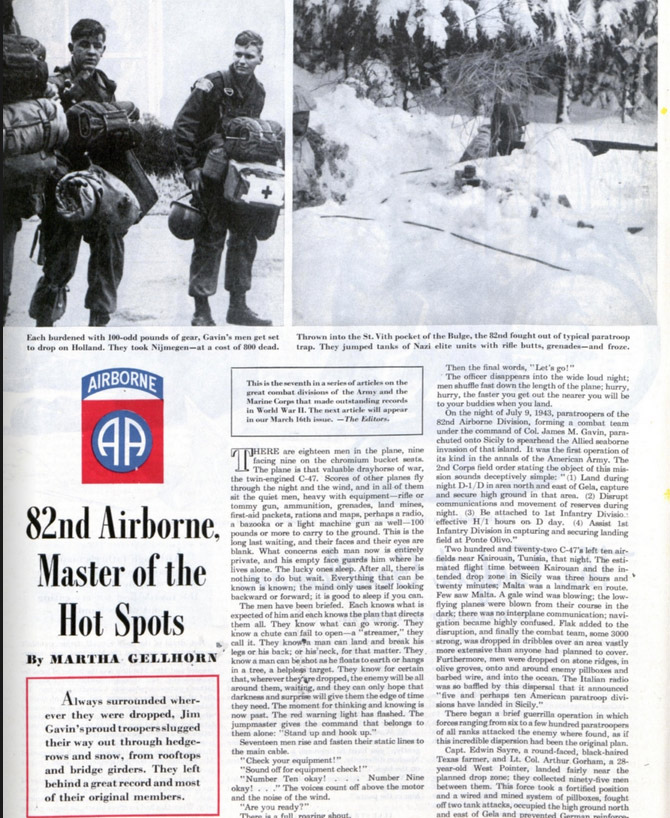

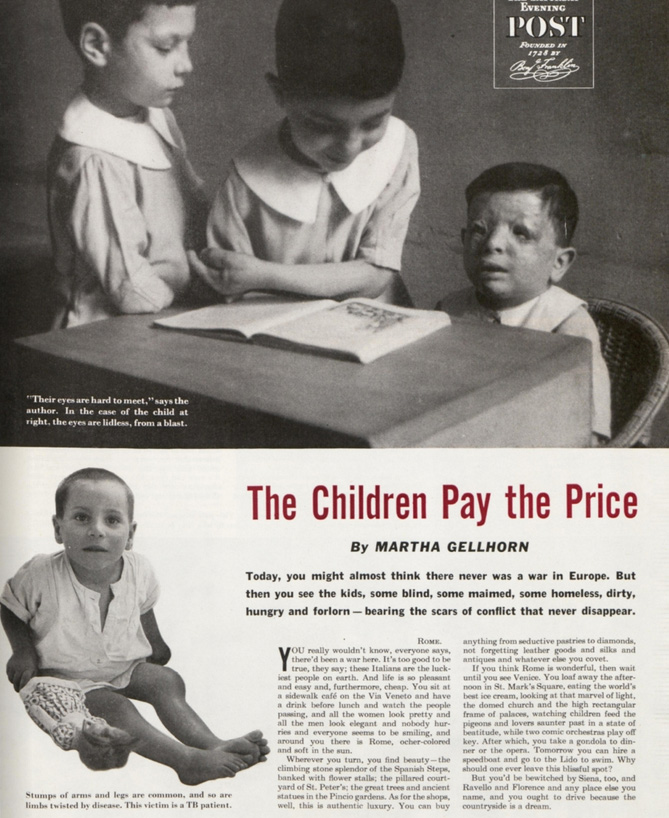

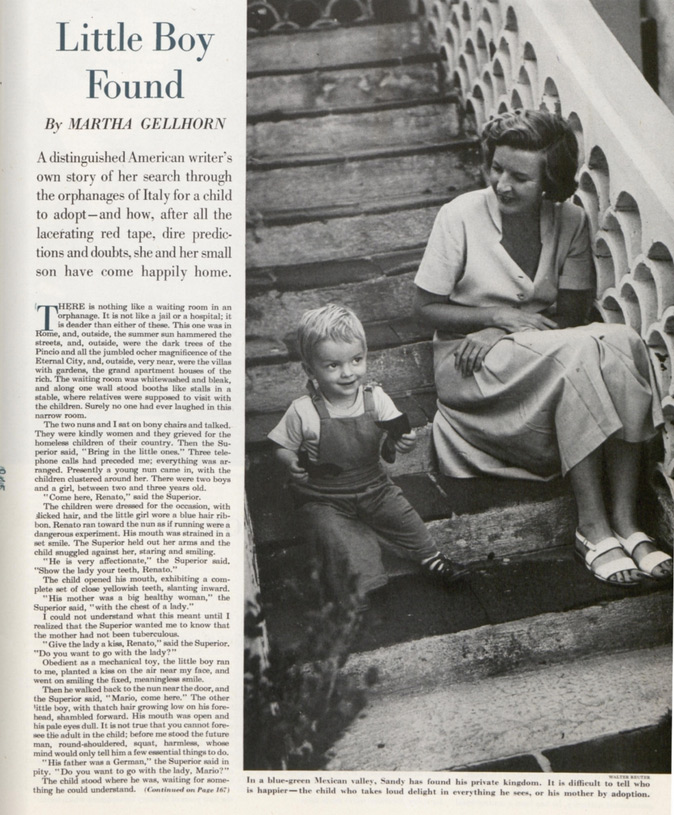

The Female War Correspondent Who Sneaked into D-Day

At the Democratic National Convention in St. Louis in 1916, Martha Gellhorn stood in a protest called the Golden Lane. She was only 8 years old, but her mother, a suffragist, had organized 7,000 women clad in yellow sashes and parasols to line Locust Street — the route delegates would take to the St. Louis Coliseum. Toward the end of the line, seven women in white represented states that endorsed women’s suffrage, and many others in gray and black stood for states unwilling to budge. Gellhorn, in a white dress, represented a future woman voter.

This initial act of defiance was only the beginning for Gellhorn. The young girl in white would go on to lead a daring and adventurous career. Throughout her life, as a war correspondent and author, she witnessed some of the most influential occurrences of the 20th century, from the Great Depression to D-Day to the U.S. invasion of Panama. From her feminist start in St. Louis, Gellhorn travelled the world and became one of the most important journalists of the century. And she did it all without permission.

Like many writers and artists of the time, Gellhorn moved to Paris in 1930. After dropping out of Bryn Mawr College and working briefly for The New Republic, the young reporter was itching to become a foreign correspondent. Gellhorn landed a job with the United Press, but was fired after reporting sexual harassment by a man connected with the agency. She spent many years travelling Europe, writing for papers in Paris and St. Louis, and even covering fashion for Vogue. While noticing the rise of fascism in Germany, Gellhorn remained a pacifist in mostly leftist circles. She decided to return to the States in 1934 because, as she said, the poverty she witnessed in Europe was rampant in her own country.

Gellhorn soon earned a spot as an investigator in Roosevelt’s Federal Emergency Relief Administration, travelling around the country and giving firsthand reports of the grim living and working conditions. She trudged around North Carolina in secondhand Parisian couture, interviewing five families a day and growing ever more troubled about the widespread destitution. Gellhorn called on Roosevelt to do more about a syphilis epidemic and lack of birth control. Her boldness gained her the ear of Eleanor Roosevelt, who became a lifelong friend.

While on assignment in Idaho, Gellhorn convinced a group of workers to break the windows of the FERA office to draw attention to their crooked boss. Although their stunt worked, she was fired from FERA: an “honorable discharge” for being “a dangerous Communist,” she wrote wryly to her parents. The Roosevelts then invited Gellhorn to live at the White House, and she spent her evenings there assisting Eleanor Roosevelt with correspondence and the first lady’s “My Day” column in Woman’s Home Companion.